The Art of AI Communication: The Complete Guide to Prompt Engineering Mastery Cover.

In an era where Large Language Models (LLMs) like GPT-4, Claude 3.5, and Gemini are reshaping the global workforce, the ability to communicate with these systems is no longer a “niche” skill—it is the ultimate modern literacy. Have you ever wondered why some people get flawless, creative, and highly accurate results from AI while others struggle with generic or hallucinated responses? The difference lies in a single, transformative discipline. This Complete Guide to Prompt Engineering Mastery is designed to take you from basic interactions to sophisticated AI orchestration, ensuring you can command these digital intellects with surgical precision.

Prompt engineering is the art and science of refining inputs to elicit the highest quality outputs from AI models. As we move deeper into 2026, understanding the underlying mechanics of how these models process tokens and semantic relationships is vital. In this guide, we will explore the core frameworks, advanced strategies, and psychological nuances of effective prompting. Whether you are a developer, a business leader, or a creative professional, mastering this craft will fundamentally change your productivity trajectory.

The Evolution of AI Interaction and Prompt Engineering

Prompt engineering has evolved rapidly from simple “keyword-based” queries to complex “chain-of-thought” architectures. In the early days of generative AI, users treated chatbots like search engines. Today, we treat them like highly capable, yet occasionally literal-minded, interns. To achieve mastery, one must recognize that a prompt is not just a question; it is a structured set of instructions, context, and constraints.

The rise of “Prompt Engineering Mastery” signifies a shift in how we approach problem-solving. We are no longer limited by our technical execution skills, but rather by our ability to articulate intent. By mastering the linguistic nuances that trigger specific neural pathways in a model, you can automate workflows that previously took weeks in a matter of seconds.

The Core Framework of a Perfect Prompt

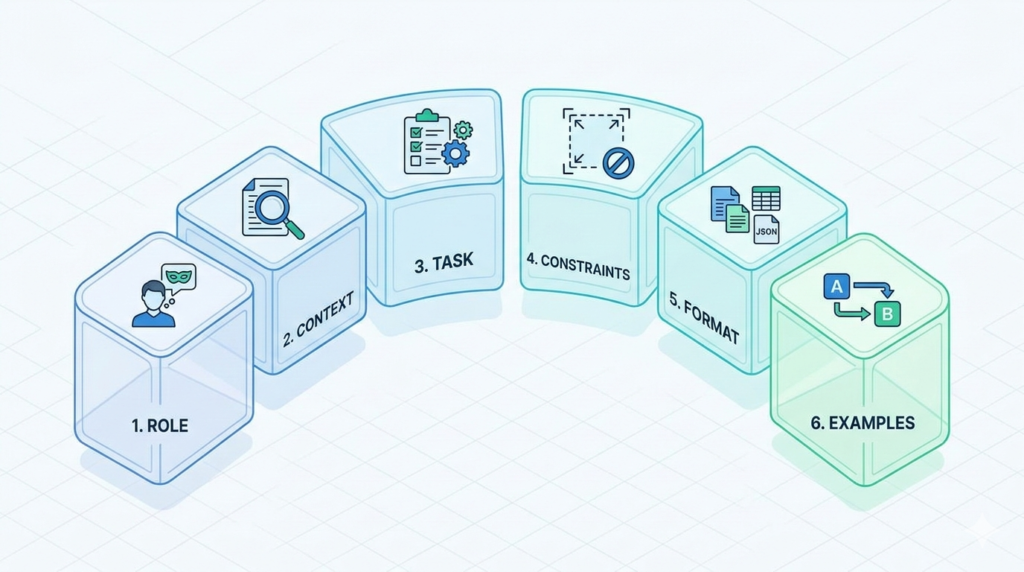

Figure 1: Six foundational building blocks of an effective AI prompt.

Before diving into complex techniques, every master must understand the foundational building blocks. A high-performing prompt typically consists of six essential elements. While not every prompt requires all six, the most effective ones usually leverage a combination of:

- Role: Defining who the AI should be (e.g., “Act as a Senior SEO Strategist”).

- Context: Providing the background information and the “why” behind the task.

- Task: The specific action you want the AI to perform.

- Constraints: Rules the AI must follow (e.g., “Do not use jargon,” “Limit to 500 words”).

- Format: The desired structure of the output (e.g., Markdown table, JSON, bullet points).

- Examples (Few-Shot): Providing 1-3 instances of what a “good” output looks like.

Defining the Role for Precision

When you assign a role, you narrow the model’s probability space. If you ask for legal advice as a “general assistant,” you get a generic disclaimer. If you ask as a “Harvard-educated Corporate Lawyer with 20 years of experience in M&A,” the tone, vocabulary, and structural focus of the response shift dramatically. This is the first step toward Prompt Engineering Mastery.

Contextual Anchoring

Context is the fuel for AI accuracy. Without it, the model fills in the gaps with its own training data, which often leads to hallucinations. Provide the “persona” of the audience, the goals of the project, and any relevant data points. The more the AI knows about the environment of the task, the less likely it is to drift off-target.

Advanced Strategies for Prompt Engineering Mastery

Once you have mastered the basics, it is time to implement advanced cognitive strategies that force the AI to “think” more deeply. These techniques are what separate the amateurs from the experts in the field of AI productivity.

Zero-Shot vs. Few-Shot Prompting

Zero-shot prompting is asking a question with no examples. It relies entirely on the model’s pre-existing knowledge. Few-shot prompting, however, involves giving the model a few examples of input-output pairs. This is incredibly powerful for maintaining brand voice or specific formatting requirements. For instance, if you want the AI to write product descriptions in a very specific “edgy” style, providing three examples of previous descriptions will yield a 90% better result than just describing the style with adjectives.

Chain-of-Thought (CoT) Prompting

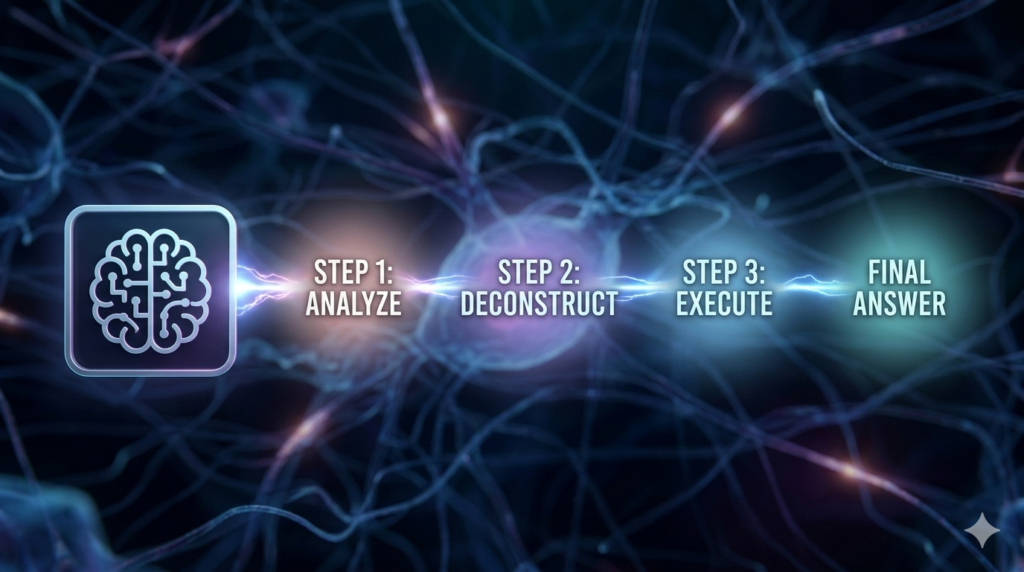

Figure 2: Activating the AI’s reasoning process using the Chain-of-Thought technique.

Chain-of-Thought is perhaps the most significant breakthrough in Prompt Engineering Mastery. By simply adding the phrase “Let’s think step-by-step,” you encourage the model to break down complex logic into smaller, manageable parts. This reduces errors in mathematical reasoning, coding, and strategic planning. CoT forces the AI to output its reasoning process before arriving at the final answer, which often corrects internal logic errors mid-stream.

Iterative Refinement and Feedback Loops

Mastery is rarely achieved in a single prompt. It is a conversational process. If the AI provides a response that is 80% correct, don’t start over. Instead, provide specific feedback: “The tone is perfect, but the third paragraph is too technical. Rewrite it for a 10th-grade reading level and add a real-world example.” This iterative loop is the hallmark of a professional prompt engineer.

Technical Nuances: Delimiters and System Instructions

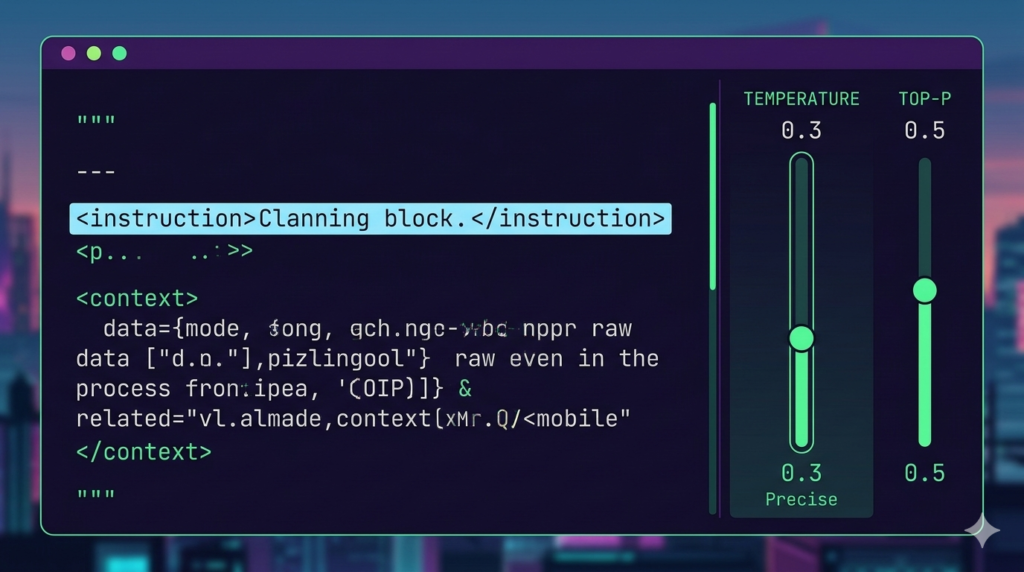

Technical control in prompt engineering: Delimiters and model parameters.

To guide an AI effectively, you must speak its “structural” language. Using delimiters like triple quotes (“””), XML tags (), or dashes (—) helps the model distinguish between your instructions and the data it needs to process.

Utilizing XML Tags for Clarity

Modern models, especially those from the Claude family, respond exceptionally well to XML-style tagging. For example:<instruction>Analyze the following text</instruction><text>[Insert Text Here]</text>

This prevents “instruction injection” where the AI confuses the content of the text with the commands you are giving it.

Temperature and Top-P Settings

While often overlooked in web interfaces, understanding Temperature is crucial for Prompt Engineering Mastery. Temperature controls randomness. A low temperature (0.1 – 0.3) makes the output predictable and focused, ideal for data extraction or coding. A high temperature (0.7 – 0.9) makes the output creative and diverse, perfect for brainstorming or creative writing.

The Psychology of Language in Prompting

AI models are trained on human language, which means they are sensitive to the “weight” of certain words. Using strong verbs, clear directives, and even “emotional” cues can sometimes improve performance.

- Positive Constraints: Instead of saying “Don’t write a long intro,” say “Keep the introduction under three sentences.” AI handles positive instructions better than negative ones.

- Emphasis: Words like “Critical,” “Essential,” and “Strictly” act as high-weight tokens that the model prioritizes during the generation process.

Applying Prompt Engineering Mastery to Business Workflows

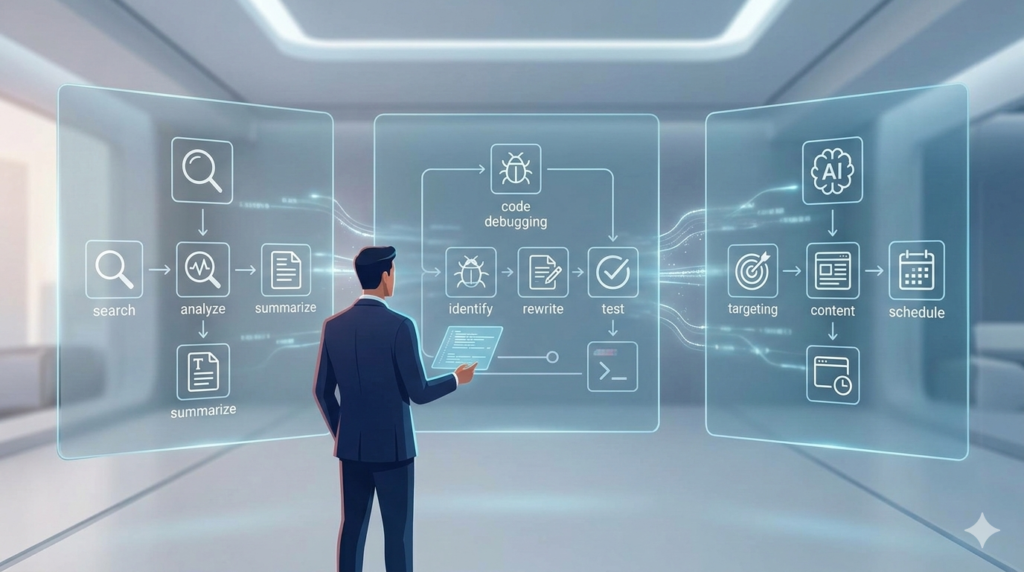

Application of prompt mastery in specific business and technical workflows.

How does this translate to the real world? Let’s look at three specific use cases where advanced prompting changes the game.

Scenario 1: Automated Content Research

Instead of asking “Summarize this article,” a master prompt would look like this:

“Act as a Research Analyst. Read the provided transcript and extract: 1) Key statistical claims, 2) Names of cited experts, and 3) Potential counter-arguments. Format the output as a Markdown table for my executive summary.”

Scenario 2: Complex Code Debugging

Instead of pasting an error message, use a “Self-Criticism” prompt:

“Examine this Python function for memory leaks. First, explain how the code currently handles memory. Second, identify three potential failure points. Third, rewrite the function to be more efficient. Finally, provide a test script to verify the fix.”

Scenario 3: AI-Driven Email Marketing

To master email prompting, you need to move beyond “Write a sales email.” You must define the “Pain Point,” the “Unique Value Proposition (UVP),” and the “Call to Action (CTA).” By feeding the AI customer testimonials and asking it to “Identify common emotional triggers and incorporate them into a 3-part email sequence,” you achieve a level of personalization that feels human.

Common Pitfalls and How to Avoid Them

Even those pursuing Prompt Engineering Mastery can fall into common traps. Recognizing these early will save you hours of frustration.

- The “Ambiguity” Trap: Using words like “some,” “brief,” or “professional” without defining them. What is “brief” to an AI might be 500 words. Always use quantitative constraints (e.g., “Max 50 words”).

- The “Kitchen Sink” Prompt: Putting too many unrelated tasks into one prompt. If you need a blog post, a social media caption, and an image prompt, do them one by one. Overloading the context window leads to “middle-of-the-prompt” forgetting.

- Ignoring the “System” Message: If you are using an API or an advanced interface, the System Message is the most powerful tool. It sets the “laws of physics” for the entire conversation. Use it to establish permanent constraints like “Always reply in Turkish” or “Never use emojis.”

The Future of Prompting: From Text to Agents

The future of AI orchestration: Transitioning from text prompts to autonomous agents.

As we look toward the horizon of 2026 and beyond, Prompt Engineering Mastery is shifting toward “Agentic Workflows.” We are no longer just prompting a chatbot; we are prompting “Agents” that can browse the web, execute code, and interact with other software.

In this new paradigm, your prompt becomes a “Goal Specification.” You aren’t telling the AI how to do every step; you are defining the outcome and the boundaries. Understanding the principles in this guide ensures that as AI becomes more autonomous, you remain the effective “Director” of the technology rather than just a passenger.

Tools of the Trade for Prompt Engineers

To truly excel, you should familiarize yourself with the ecosystem surrounding LLMs. Tools like LangChain, PromptLayer, and various “Prompt Libraries” can help you version-control and test your prompts. Testing a prompt across different models (GPT vs. Claude vs. Llama) is also a key part of the mastery process, as each model has a unique “personality” and sensitivity to specific phrasing.

Conclusion: Your Journey to AI Excellence

Mastering the art of communication with artificial intelligence is the single most valuable skill you can acquire in the digital age. By moving from simple queries to structured, context-rich, and iterative prompting, you unlock a level of productivity that was previously unimaginable. This Complete Guide to Prompt Engineering Mastery has provided you with the frameworks—Role, Context, Constraints, and Chain-of-Thought—needed to dominate this field.

Remember, the goal of a master prompt engineer is not just to get an answer, but to get the right answer with the least amount of friction. As AI models continue to evolve, your ability to articulate complex ideas into actionable instructions will remain your greatest competitive advantage. Start treating your prompts as code, your interactions as collaborations, and your creativity as the only limit.

Now, take these strategies, apply them to your daily workflows, and witness the exponential growth in your AI output quality. The future belongs to those who speak the language of the machines.

Frequently Asked Questions About Prompt Engineering Mastery

Q1: What is the most important element of a high-quality prompt?

The most important element of Prompt Engineering Mastery is Context. Without context, the AI is essentially “guessing” what you want based on statistical probabilities from its training data. By providing clear background information, defining the target audience, and stating the specific goal, you ground the AI’s response in reality. This reduces hallucinations and ensures the output is relevant to your specific needs. While Roles and Constraints are helpful, Context is the foundation upon which all successful AI interactions are built.

Q2: How does “Chain-of-Thought” prompting actually work?

Chain-of-Thought (CoT) prompting works by encouraging the model to generate intermediate reasoning steps before providing a final answer. This is often triggered by phrases like “Let’s think step-by-step.” In terms of neural network processing, this allows the model to “use more tokens” for the reasoning process, which prevents it from jumping to a premature—and often incorrect—conclusion. It is particularly effective for complex logic, math problems, and strategic planning where the process is just as important as the result.

Q3: Can I use the same prompt for GPT-4, Claude, and Gemini?

While many prompts are “cross-compatible,” true Prompt Engineering Mastery requires understanding that different models have different “sensitivities.” For example, Claude is exceptionally good at following long, complex XML-tagged instructions, while GPT-4 excels at concise logic and following strict formatting rules. Gemini often performs better with direct, data-heavy prompts. To get the best results, you should slightly tweak your prompts to play to the specific strengths of the model you are using at that moment.

Q4: What is “Few-Shot” prompting and why should I use it?

Few-shot prompting is the practice of providing the AI with a few examples of the desired input and output before asking it to perform a new task. For example, if you want the AI to format customer feedback into a specific JSON structure, you would show it 2 or 3 examples of “Feedback -> JSON.” This is far more effective than just describing the JSON structure in words. It provides a visual and structural template that the model can mimic, leading to much higher consistency and accuracy in the output.

Q5: How do I prevent the AI from “hallucinating” or making things up?

To minimize hallucinations and move toward Prompt Engineering Mastery, you should use “grounding” techniques. First, provide the source text or data you want the AI to use. Second, use a constraint like: “Base your answer only on the provided text. If the answer is not in the text, say ‘I do not know.'” Third, ask the AI to cite its sources or provide quotes from the text. These strategies force the model to look inward at the provided context rather than outward at its generalized (and sometimes inaccurate) training data.

Q6: Is Prompt Engineering a permanent career or a temporary skill?

While the “title” of Prompt Engineer might evolve, the underlying skill of AI Orchestration is permanent. As AI becomes more integrated into software (via agents and APIs), the need for humans who can precisely define goals, set boundaries, and debug AI logic will only grow. Even if “natural language” becomes better understood by machines, the ability to think structurally and communicate complex requirements will always be a high-value professional skill in any AI-driven economy.

Q7: What are delimiters and why are they used in prompts?

Delimiters are special characters or tags that help the AI distinguish between different parts of your prompt. Common delimiters include """, ---, ###, or <tags>. Using them is a core part of Prompt Engineering Mastery because it prevents “instruction confusion.” For instance, if you are asking the AI to summarize a report that contains instructions, the AI might get confused and start following the report’s instructions instead of yours. Delimiters clearly mark where your instructions end and the data begins.

Q8: How does the “Temperature” setting affect my results?

Temperature is a configuration parameter that determines the “randomness” of the AI’s output. A temperature of 0 makes the model deterministic—it will almost always choose the most likely next word, making it great for factual tasks. A temperature of 1.0 or higher makes the model more “creative” by allowing it to choose less likely words, which is better for poetry or brainstorming. Mastering the balance of temperature allows you to tailor the AI’s “personality” to the specific task at hand.

Q9: What is the “Role-Play” technique in prompting?

The Role-Play technique involves assigning a specific persona to the AI, such as “Act as a Senior Python Developer” or “Act as a specialized UX Researcher.” This is effective because it shifts the model’s internal probability weights toward a specific subset of its training data. It will use the jargon, tone, and problem-solving frameworks associated with that profession. This is a foundational strategy in Prompt Engineering Mastery for getting expert-level outputs instead of generic assistant-level responses.

Q10: How can I improve my prompts for creative writing?

For creative writing, avoid being too restrictive. Use a higher temperature (0.7-0.9) and focus your prompt on “Atmosphere,” “Tone,” and “Sensory Details.” Instead of saying “Write a story about a forest,” say “Write a noir-style opening scene set in a damp, bioluminescent forest, focusing on the sounds of the environment and the protagonist’s sense of isolation.” Providing a “vibe” or a specific “stylistic influence” (e.g., “in the style of Ernest Hemingway”) is also a powerful way to achieve mastery in creative AI outputs.

Q11: What is the “Negative Prompting” technique?

Negative prompting involves explicitly telling the AI what not to do. However, because LLMs sometimes struggle with the word “not,” it is often better to frame these as “Prohibited Actions.” For example, “Avoid using passive voice,” “Do not mention the company’s competitors,” or “Exclude any mention of pricing.” In Prompt Engineering Mastery, we often combine these into a “Constraints” section at the end of the prompt to ensure the AI has a final checklist of what to avoid before it finishes generating.

Q12: How do I handle very long documents with AI?

When dealing with long documents, you face the “Context Window” limit. To master this, use a “Map-Reduce” approach: ask the AI to summarize individual sections or chapters first, and then ask it to provide a final summary based on those individual summaries. Alternatively, use tools that leverage RAG (Retrieval-Augmented Generation) to “search” the document for relevant parts before prompting. This ensures the AI doesn’t lose focus or “forget” the beginning of the document as it reaches the end.

Pingback: The Top 10 AI Tools Every Freelancer Needs: Elevating Your Digital Career in 2026 - yourtasksai.com